Following on from my last post, Tips and Tricks to Make Tableau a Success in Your Company, this feels like a good place to expand on that topic and share some of the best practices I have picked up from delivering Tableau projects and providing Tableau training in London.

Tableau Training & Project Management Tips

First and foremost, a disclaimer: I have experienced both very successful and somewhat difficult projects throughout my time providing Tableau training with TAP CXM, and I have taken a lot of learnings away from both.

Below, I take you through some key aspects that I think will help ensure your BI project is as successful as it can be.

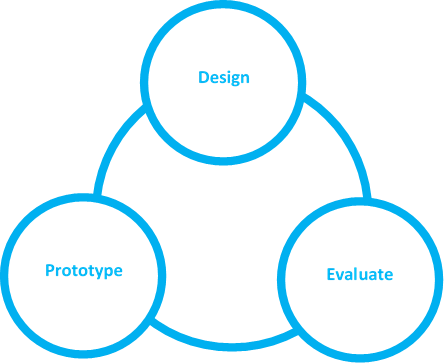

Iterative Design

As we explored in the previous post, Tableau is still a relatively new concept in many organisations. Making big changes all at once tends to be met with greater resistance. It’s imperative that we grow Tableau organically and allow the excitement of the product offering to spread amongst the key stakeholders.

Even the best Tableau dashboard designers will not always produce the most insightful dashboard on the first attempt. Tableau work therefore lends itself to iterative development, which helps ensure that the end user is completely satisfied.

I have found that the best products our BI team at TAP has produced came to life through multiple short sprints with constant communication with the end user. There is a mutual understanding with our clients that we are continually developing their Tableau product rather than aiming for a ‘finished article’. Some projects naturally come to an end when further development no longer adds value, and this is something we at TAP CXM emphasise during Tableau training.

With iterative design, it is far easier to react to the changes and improvements an end user would like to see. In my opinion, this brings the greatest added benefit to any Tableau project: it maintains a high level of engagement with Tableau among its end users.

A product with a constant stream of improvements and new features is likely to sustain higher engagement than one that remains static for months on end. If people within the organisation stop using Tableau, it will be harder to encourage its further growth.

To support iterative design and a sprint-focused working environment, Agile and Scrum complement Tableau project delivery, and they form an important part of our Tableau training in London.

Know What’s in Your Toolbelt

There is an old Russian proverb that translates loosely to ‘You cannot break a wall with your forehead’. In essence, make sure you use the right tool for the job.

New software is often used to try to solve every requirement, simply because it is the new kid on the block and a lot of budget has likely been invested in it. However, building a solution in a tool that was not designed to accommodate it is not the best way to justify that spend, and it is likely to result in an inferior product.

Tableau is no different. It is fundamentally important to understand early on what the platform does well, which is why our Tableau training across London teaches people its strengths and weaknesses. The best middle ground I have found is to weigh the risks of using a tool other than Tableau against the time and development cost of using Tableau for something it was not designed for.

In a recent project, we altered the XML of Tableau workbooks so that certain dashboards could be displayed in a traditional, Excel-like fashion. This served a specific purpose, one that would not have been better met by adopting a different tool or by changing the requirements to fit Tableau. The perceived benefit of having this function within our Tableau solution, where an ageing customer base could go to a single place for all the tools they needed, far outweighed the cost of development within Tableau.

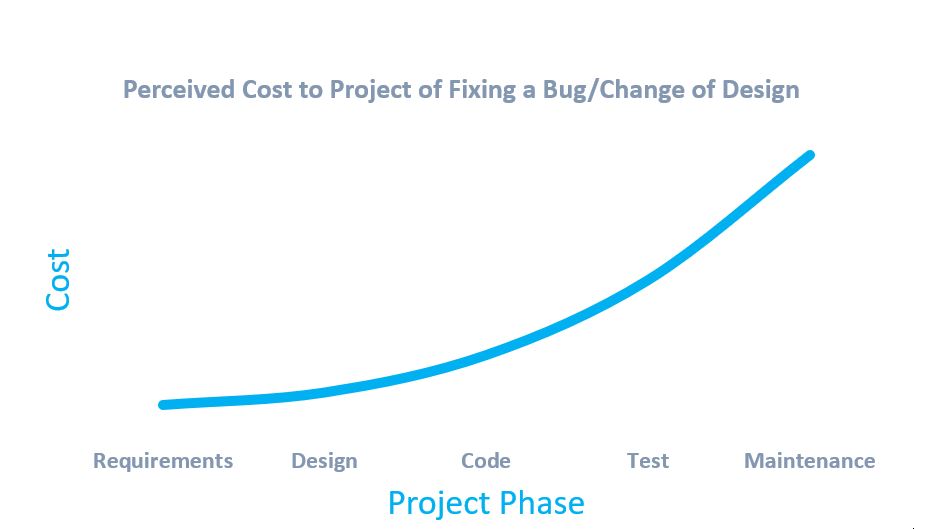

Model Ahead

In many of our Tableau projects, we deliver ETL and data modelling solutions alongside the BI solution and Tableau training. As such, we work closely with our data modelling team at a stage when the finished data model that Tableau will use to produce its dashboards is not yet ready to connect to or, in some cases, does not yet exist.

Rather than losing development time waiting for the final model to be fully built and populated, we work closely with our data modellers to understand what shape the data needs to be in to make the Tableau offering as efficient and easy to build as possible.

To do this, we often build placeholder models outside the development environment that directly mirror what we expect to see in the final model build. These can range from simple dummy Excel workbooks that mirror the structure to development SQL tables populated with test data. You can learn more about this approach through our Tableau training.
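As an illustration of the simplest end of that range, here is a minimal Python sketch that writes a dummy CSV file mirroring an expected fact table, which Tableau can connect to as a text file. The table layout (`customer_id`, `product_sku`, `order_date`, `revenue`) and the function names are hypothetical; in practice the columns would come from the specification agreed with your data modellers.

```python
import csv
import random
from datetime import date, timedelta

# Hypothetical schema for the expected final fact table. In a real project
# this would be taken from the data modellers' specification.
PLACEHOLDER_SCHEMA = ["customer_id", "product_sku", "order_date", "revenue"]


def make_placeholder_rows(n_rows, seed=42):
    """Generate dummy rows that mirror the expected final model's structure."""
    rng = random.Random(seed)  # fixed seed so the dummy data is reproducible
    start = date(2024, 1, 1)
    rows = []
    for _ in range(n_rows):
        rows.append({
            "customer_id": f"C{rng.randint(1, 50):04d}",
            "product_sku": f"SKU-{rng.randint(1, 20):03d}",
            "order_date": (start + timedelta(days=rng.randint(0, 364))).isoformat(),
            "revenue": round(rng.uniform(5.0, 500.0), 2),
        })
    return rows


def write_placeholder_csv(path, rows):
    """Write the rows to a CSV file that Tableau can open as a text file source."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=PLACEHOLDER_SCHEMA)
        writer.writeheader()
        writer.writerows(rows)
```

Calling `write_placeholder_csv("placeholder_fact_sales.csv", make_placeholder_rows(1000))` produces a file you can start building against. The fixed seed keeps the dummy values stable between runs, so dashboards do not shift every time the placeholder is regenerated, and keeping the column names identical to the agreed specification makes the later switch to the real model much smoother.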

The benefits are clear. Firstly, you can begin BI development without waiting for the data to be ready. Secondly, it allows the BI schema within Tableau to be tested early, to ensure it will deliver what it needs to. As Tableau schemas grow more complex, we often discover additional data points we need to support the visualisations we want to create.

This normally means the supporting data model will need further iteration. However, because the data model is not yet a mature product, it is far more time- and cost-efficient to remodel it in the design phase than after it has been developed, tested and released.

When you switch from the placeholder to the final model, Tableau’s ‘Replace References’ feature can be very useful for any fields that differ from the original specification.

Comparing Apples and Oranges

We have likely all experienced this before, and I can personally attest to the number of hours spent exploring data to investigate why two reported numbers differ when they should match. The cause is nearly always separate data models, which we cover in more detail in our Tableau training in London.

A side effect I tend to see with Tableau, owing to its ability to connect to a multitude of data sources, is that it gets used either in parallel with, or in place of, an ETL tool and data model. If not carefully marshalled, this can cause exactly the mismatch described above, which most organisations spend a lot of money trying to eradicate.

Clients quite commonly ask whether we can use Tableau to ingest data directly, as they would tend to end up with a complete product much faster.

In earlier projects, I was quite open to doing this, but over time I have learned that, for long-term project quality, it is best to draw a line here.

When providing Tableau training, I advise that data which is anonymised, aggregated or does not complement your data model (i.e. there is no way to link it to a Customer/Product/SKU) is an acceptable source to connect to directly in Tableau. One example is Google Analytics web data.

There is little benefit in including such data within a customer-level data model, as we cannot match it at an individual level. We would essentially end up with a set of tables within the model that do not relate to the rest of it, creating technical debt in the infrastructure.

If, however, a requirement includes data that could form a link, such as a ‘Staff Training’ document held as an Excel workbook, it has the potential to add value to an individual-level data model. More importantly, bringing it into the model prevents the emergence of a second data model that is not governed by the same rules created within our main one.
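The rule of thumb above can be expressed as a small decision function. This is a hypothetical Python sketch rather than a TAP CXM tool: the key names in `MODEL_LINK_KEYS` (including an assumed `staff_id` for the staff training case) and the `SourceProfile` fields are illustrative assumptions chosen to mirror the Customer/Product/SKU and ‘Staff Training’ examples.

```python
from dataclasses import dataclass

# Hypothetical entity keys that the governed, individual-level data model
# joins on. The column names are assumptions for illustration only.
MODEL_LINK_KEYS = {"customer_id", "product_id", "sku", "staff_id"}


@dataclass
class SourceProfile:
    """A minimal description of a candidate data source (hypothetical)."""
    name: str
    columns: set[str]
    anonymised: bool = False
    aggregated: bool = False


def route_source(source: SourceProfile) -> str:
    """Decide where a new source should land under the rule of thumb above."""
    linkable = bool(source.columns & MODEL_LINK_KEYS)
    if source.anonymised or source.aggregated or not linkable:
        # Nothing can be matched at an individual level, so adding it to the
        # model would only create disconnected tables: connect directly.
        return "connect directly in Tableau"
    # The source could form a link, so bring it into the governed model via
    # ETL rather than letting a second, ungoverned model emerge in Tableau.
    return "integrate into the data model"
```

For example, an aggregated Google Analytics extract with columns such as `date`, `page_path` and `sessions` routes to a direct connection, whereas a staff training workbook keyed on the hypothetical `staff_id` routes into the data model. In practice the judgement is rarely this binary, but writing the rule down makes the line you have drawn explicit to the whole team.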

Just because Tableau can connect to many different data sources simultaneously does not mean you should feel obliged to use that ability. During training, we highlight that Tableau typically produces a much better end product when working from a clean, efficient data source designed specifically for Tableau to sit on top of. The gains in viz rendering and extract performance are well worth the extra ETL development time, and this benefit will only grow as your data model scales up over time.

Summary

Tableau projects can be very exciting to deliver. Many clients will be seeing their data presented in such an interactive manner for the first time, and it is a great feeling to watch them enjoy a new Tableau dashboard as much as a child enjoys opening a Christmas present.

I feel that it is our role, as those delivering this technology, to ensure the organisations we work with continue to feel inspired and maintain a high level of interest and engagement with Tableau. I believe that following a few of the steps above will really help you do so and ensure your customers get the best service possible. You can also enquire about Tableau training in London for even deeper advice and insights.
